Marketing Strategy Tools: Why a Toolbox Is Not a Strategy

I've spent twenty years building marketing strategy. Inside agencies. Inside companies. Inside the classroom at Solvay Brussels School. Across that time, I've used every tool you'd find in a "marketing strategy frameworks" listicle — and most of the ones you wouldn't.

SWOT. Porter's Five Forces. The BCG Matrix. The Ansoff Matrix. Customer Journey Maps. The Brand Identity Prism. Keller's Brand Equity Pyramid. The Balanced Scorecard. The Business Model Canvas. Perceptual Maps. Hooley's Segment Attractiveness Framework. The Marketing Mix in all its mutations — 4Ps, 7Ps, 5Cs.

Each one illuminates something real. None of them, on their own or stacked together, builds a strategy.

If you've read a roundup article that promises "14 essential marketing strategy tools," you already have the toolbox. You may have noticed the toolbox isn't enough. This article explains why — and what an actual marketing operating system looks like.

What the standard marketing strategy tools do well

Let's give the standard tools their due before examining where they fall short.

SWOT forces you to confront strengths, weaknesses, opportunities, and threats in a single frame. It's a useful warm-up — a way to surface assumptions before you commit to a direction.

Porter's Five Forces disciplines your view of the industry. Suppliers, buyers, rivalry, substitutes, new entrants. Run it well and you stop confusing "tough market" with "competitive structure."

The BCG Matrix organizes a portfolio. Stars, cash cows, dogs, question marks. Decide where to invest, where to harvest, where to divest.

The Ansoff Matrix names the four growth paths: penetrate the market you have, develop new markets, develop new products, or diversify. Useful for keeping management honest about which game they're playing.

The Customer Journey Map traces touchpoints from awareness to advocacy. It catches breakdowns that the org chart hides.

The Brand Identity Prism and Keller's Brand Equity Pyramid push brand thinking past logos and taglines into something operationally meaningful.

The Balanced Scorecard balances financial metrics against customer, process, and learning measures. Without it, finance wins every quarterly review by default.

Every one of these tools is real. Every one solves a specific diagnostic problem. I still use most of them.

The issue isn't the tools. It's what happens when you have fourteen of them and no operating system.

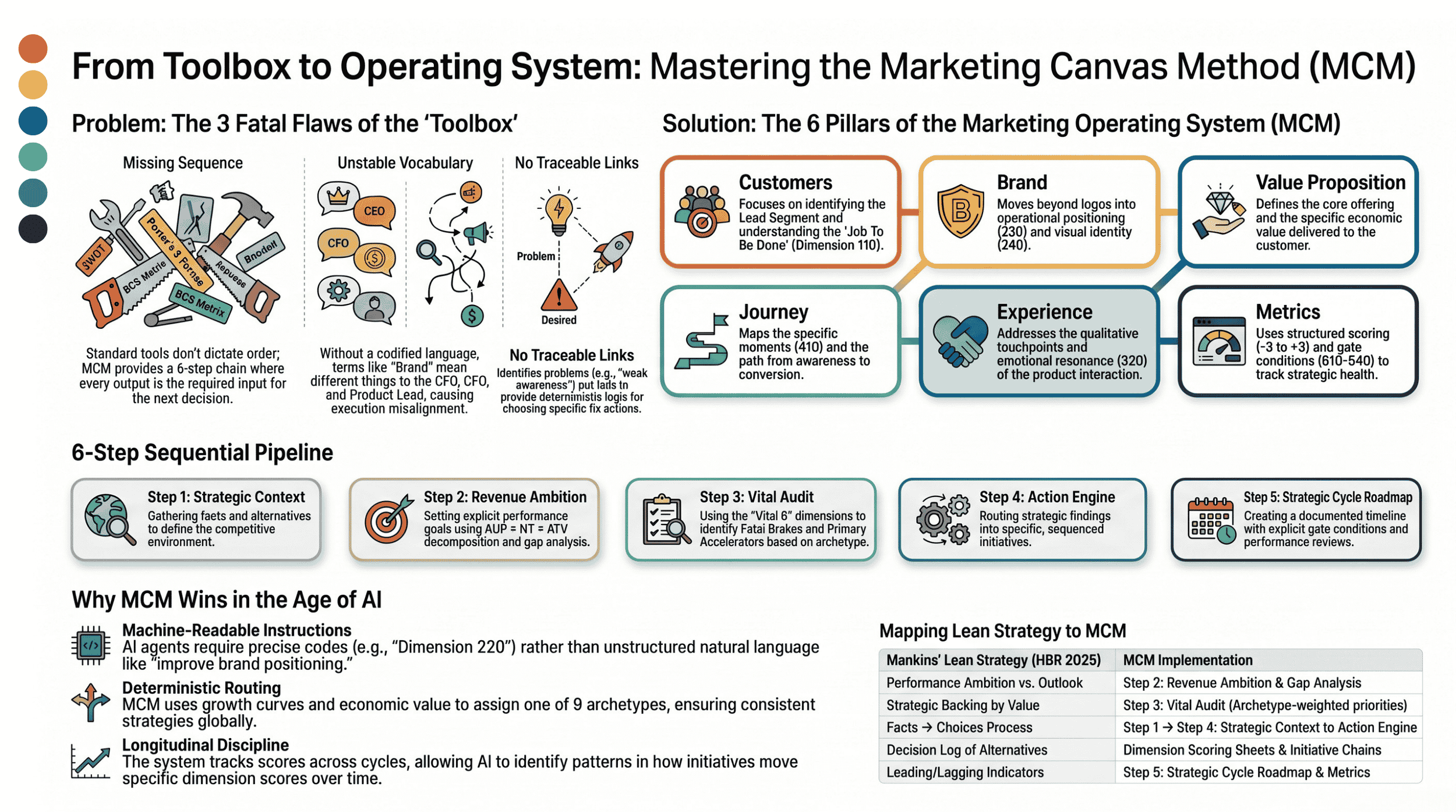

Three problems the toolbox can't solve

1. The vocabulary is unstable

You've sat in this meeting. Someone says, "We need to improve the brand."

The CFO hears "brand awareness." The product lead hears "perceived quality." The agency hears "tone of voice." The CEO hears "valuation." Four people leave the room with four different mandates, each one confident they heard the instruction correctly.

They didn't.

Marketing doesn't have a precise shared vocabulary. Every tool defines its core terms slightly differently. The SWOT framework's "strength" isn't the same as the BCG matrix's "market share advantage." The Brand Identity Prism's "personality" isn't the same as Keller's "brand resonance." The customer in your Journey Map isn't necessarily the same customer your Segmentation Chart targets.

Compare this with finance. When the CFO says "EBITDA," nobody asks for clarification. The term is codified. Two CFOs in two countries mean exactly the same thing.

Some of the most sophisticated marketing organizations have tried to solve this internally. The Marketing2020 study, published in HBR in 2014 by Marc de Swaan Arons, Frank van den Driest, and then-Unilever CMO Keith Weed, surveyed over 10,000 marketers across 92 countries. It found that companies like Coca-Cola, Unilever, and Shiseido had invested in dedicated internal marketing academies for one specific reason: to create a single marketing language and a consistent way of doing marketing across their organizations. The academies work, but only for the company that owns one. They don't help anyone else. And they aren't machine-readable: the language they create lives in slide decks, training programs, and tribal knowledge, not in coded dimensions an AI agent can act on.

When marketers say "brand," everyone has to decide what they mean — and most teams skip the step.

2. The sequence is missing

Open any marketing strategy guide and you'll find the same fourteen tools, grouped under labels like "Define," "Analyze," "Plan," and "Execute."

Now ask: in what order? At what cadence? What does the SWOT output feed? Which tool's findings determine which other tool runs next? When does Customer Journey Mapping happen — before or after positioning? Does the Brand Identity Prism precede or follow segment selection?

The honest answer: the listicle doesn't say. Most marketing strategy guides treat the tools as a buffet. Take what you like. Skip what you don't. Build the slide deck around whichever ones have nice templates.

This is the core difference between a framework and a method. A framework gives you a shape to fill in. A method gives you a sequence — a chain of decisions where each output is the next step's required input.

Without sequence, the strategy isn't reproducible. Two qualified marketers, given the same data, will produce two different strategies because they ran the tools in different orders. That's not a profession. That's an art form pretending to be an engineering discipline.

3. There's no traceable link from diagnosis to action

This is the deepest problem.

Run a SWOT. You identify a weakness — say, "limited brand awareness in the mid-market segment." Now what?

Do you launch a campaign? Hire an agency? Rebuild the website? Sponsor a podcast? Each option costs money. Each takes management attention. Each forecloses other options. The SWOT doesn't tell you which one to pick. Neither does Porter's. Neither does the BCG Matrix.

The board asks the inevitable question: "Why are we doing this initiative and not that other one?" The honest answer is usually some combination of "the agency suggested it," "we did it last year," and "the team feels strongly about it."

That's not a strategy. That's a budget defended by sentiment.

In finance, every line item traces to an account. Every forecast traces to auditable assumptions. A new CFO can pick up the prior year's plan and reconstruct exactly why each commitment was made. In marketing, the strategy traces to a meeting where senior people agreed it felt right.

When the budget gets cut, the team can't defend the choices — because the choices were never connected to evidence in a way someone outside the room could audit.

The wider strategy world is reaching the same conclusion

If this argument felt fringe a decade ago, it doesn't anymore.

In the May–June 2025 issue of Harvard Business Review, Bain partner Michael Mankins published a piece called "Lean Strategy Making." His thesis: strategic decision-making at most large companies is the worst kind of bespoke work — every decision treated as unique, no two run the same way, with predictable consequences.

The numbers from Bain's survey of 350 large companies are uncomfortable.

Roughly half had standard templates for strategic plans, but very few followed a consistent process for the actual decisions. About a quarter of strategic decisions were judged suboptimal in hindsight. Nearly half were too slow. On average, companies achieved less than 70% of what their strategies aimed for.

Mankins makes the comparison directly: if a manufacturing line ran at those defect and cycle-time rates, leadership would shut it down. Yet boardrooms tolerate the same inefficiency in strategy itself.

His prescription — what he calls lean strategy making — has three components. Setting priorities through an explicit gap between an aspirational performance ambition and a realistic multi-year outlook of where the current strategy is actually heading. Making ongoing decisions through a standardized two-session process (a facts-and-alternatives session, then a choices-and-commitments session) backed by an explicit decision log. Monitoring through performance reviews that use both leading and lagging indicators, with the discipline to revisit decisions when the underlying facts change.

This is not a new argument inside Bain. In 2014, Bain partners Aditya Joshi and Eduardo Giménez published "Decision-Driven Marketing" in HBR, making the case specifically for marketing: campaigns that miss objectives, sales-marketing misalignment, and ROI-blind technology spending are fundamentally decision-making problems at the boundaries between marketing and adjacent functions like sales, IT, and finance. Their prescription mirrored what Mankins would later generalize — identify the critical decisions, clarify who owns each one, agree on the criteria, and build a standard process. The Target "strategy brief" they describe (a two-page template disciplining every major marketing initiative) is an early instance of exactly the structured decision artifact Mankins now calls a decision log.

Eleven years between the two pieces. Same firm. Same argument extended from marketing to general strategy.

But notice what neither prescription supplies: the substantive marketing content that fills the brief. Joshi and Giménez tell you to have decision criteria; they don't specify which dimensions of marketing those criteria should evaluate. Mankins tells you to compare ambition against a multi-year outlook; he doesn't tell you which marketing levers actually move the outlook. Both are correct about the meta-process. Neither is a marketing operating system.

This is exactly where the Marketing Canvas Method fits.

MCM is the marketing-specific implementation of what Mankins prescribes at the management level. Two practitioners, two domains, the same structural conclusion: strategy without standardization is craft, and craft doesn't scale. The parallels map cleanly:

| Mankins' Lean Strategy | MCM equivalent |
|---|---|
| Performance ambition vs. multi-year outlook | Step 2: Revenue Ambition with explicit AOP × NT × ATV decomposition and gap analysis |
| Strategic backlog, prioritised by value at stake | Step 3: Vital Audit producing archetype-weighted priorities (Fatal Brakes, Primary Accelerators) |
| Two-session decision process: facts → choices | Step 1 → Step 4: Strategic Context feeding the Action Engine via deterministic archetype routing |
| Decision log of alternatives considered and chosen | Dimension scoring sheet plus traceable initiative-to-dimension chain |
| Performance reviews with leading and lagging indicators | Step 5: Strategic Cycle Roadmap with gate conditions and Metrics dimensions (610–640) |
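To make the first row concrete, here is a minimal sketch of a gap analysis in Python. It assumes that AOP × NT × ATV multiplies out to annual revenue; the article does not expand those acronyms, so they are kept as opaque numeric factors. The names `RevenueOutlook` and `ambition_gap`, and every figure, are hypothetical and not part of the published method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RevenueOutlook:
    """Three multiplicative factors, named as in the comparison table.

    Assumption of this sketch: their product is annual revenue.
    """
    aop: float
    nt: float
    atv: float

    def revenue(self) -> float:
        return self.aop * self.nt * self.atv


def ambition_gap(ambition: float, outlook: RevenueOutlook) -> float:
    """Positive result: the current trajectory falls short of the ambition."""
    return ambition - outlook.revenue()


# Made-up numbers, purely for illustration.
outlook = RevenueOutlook(aop=2_000, nt=4, atv=100.0)
gap = ambition_gap(ambition=1_000_000.0, outlook=outlook)
print(f"outlook={outlook.revenue():,.0f} gap={gap:,.0f}")
```

Writing the outlook as explicit factors means a gap can be traced to the factor that would have to move, rather than to revenue as a whole.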

The HBR article is the case for standardization. MCM is the standard, applied to marketing. Read together, they describe the transition that's coming for marketing strategy — the same one manufacturing went through fifty years ago, from craft to discipline.

The AI test: can an agent execute your strategy?

This problem has always existed. It was manageable when humans were the only ones executing.

A talented CMO could hold the ambiguity in her head, translating between the CEO who said "grow revenue" and the creative director who said "let me tell a story." The CMO was the Rosetta Stone — the human translation layer that turned imprecise direction into coordinated execution.

That era is ending.

AI agents are increasingly part of how marketing gets done. Content drafts. Campaign analytics. Audience segmentation. Initial creative concepting. Some teams have moved further — agents drafting media plans, optimizing budget allocation across channels, generating positioning options, running competitive intelligence. The work that used to occupy junior strategists is now compressed into prompts.

The agents have one absolute requirement: they need a precise, structured instruction set.

This is where the standard marketing strategy tools break.

What AI does to the toolbox

Run a SWOT through ChatGPT. You'll get four lists of bullet points in thirty seconds. Faster than any consultant. The bullets will sound credible. They'll also be impossible to act on, impossible to compare across business units, and impossible to feed into the next decision. The SWOT was designed for a human to interpret in a boardroom — not for a machine to execute.

The same is true for most of the standard tools.

Porter's Five Forces outputs natural language descriptions of supplier power, buyer power, rivalry. An agent can summarize them. It cannot determine what you should do about them, because the framework never specified what "high supplier power" should trigger.

The BCG Matrix classifies products as stars, cash cows, dogs, or question marks. Useful labels. The labels don't carry budget allocation logic. An agent reading "this is a cash cow" has no rule for what comes next.

The Brand Identity Prism describes brand personality across six unstructured facets. An agent can generate text matching those facets. It can't tell you whether your current brand is two units away or four units away from where you need it to be — because there are no units.

The Customer Journey Map is a diagram. An agent can draw one. It cannot reason about which touchpoint to fix first when budget is constrained.

The tools fail the AI test for one structural reason: their outputs are unstructured natural language, and natural language doesn't compute. AI can produce more of these outputs faster — but more imprecise output isn't the same as a working strategy. Speed plus imprecision doesn't cancel out. It compounds.

The deeper shift: the firm itself is becoming an AI factory

The point goes beyond agents helping marketing teams move faster. It is structural.

In their 2020 book Competing in the Age of AI, Harvard Business School professors Marco Iansiti and Karim Lakhani argue that the firm of the future is being rebuilt around what they call an AI factory: a scalable decision-making engine at the company's operational core, powered by data pipelines, machine learning algorithms, and a unified software platform. Their thesis, drawn from years of studying companies including Amazon, Microsoft, Ant Financial, Netflix, Fidelity, and Moderna, is that the AI factory is becoming the new operational foundation of business. It is the place where execution actually happens.

The language is precise. The new firm industrializes its approach to data, analytics, and artificial intelligence the same way classical manufacturing industrialized physical production a century ago. Three pillars define the factory: algorithms that make and influence decisions, data pipelines that feed those algorithms, and the software infrastructure that lets them scale.

When Iansiti and Lakhani study what separates AI-first firms from traditional ones, the answer is not better technology. It is a different operating architecture — one where decisions are made by integrated systems rather than negotiated across siloed departments. They observe that this architecture compounds three things at once: scale, scope, and learning. Traditional organizations cannot match it because their decision-making was never designed to be machine-readable in the first place. The argument has been endorsed by Satya Nadella, who calls it the playbook for becoming an AI-first company.

Now apply that lens to marketing strategy.

If the firm's operational core is increasingly an AI factory making decisions, what does the strategy layer feed into it? If your marketing strategy is a slide deck of SWOT findings, brand positioning narratives, and journey map illustrations, it cannot enter the factory. It has no encodable inputs, no structured outputs, no decision rules a machine can compute against. The factory's algorithms run on what they can read. Anything that isn't structured stays at the conference room door.

This is the deeper reason the standard marketing strategy tools have a structural problem in the AI era. They weren't designed to plug into a digital operating model. They were designed for a board meeting.

Why MCM was built for this

The Marketing Canvas Method was designed to be machine-readable from the beginning. Not as an afterthought. Every step produces structured outputs: coded dimensions, numerical scores, deterministic routing logic, quantified gaps, sequenced initiatives. The method speaks in numbers, codes, and decision trees. That's the language machines work in.

Four structural choices make the difference.

Numeric dimension IDs. Every concept in MCM has a code. 110 for Job To Be Done. 220 for Positioning. 420 for Experience. 530 for Media. An agent receiving the instruction "raise dimension 220 from −1 to +2 for an A1 Disruptive Newcomer in Acquisition mode" knows exactly what to act on. The instruction is unambiguous, machine-readable, and traceable back to the diagnosis that produced it. "Improve the brand" produces fourteen different attempts. The dimension instruction produces one.

Forced-choice scoring from −3 to +3. An agent can compute the gap between current and target on any dimension. It can rank actions by gap size weighted by archetype priority. It can flag scoring inconsistencies across cycles. None of this is possible with "we have a strong brand."

Deterministic archetype selection. M3 (Growth Curve) × M4 (Economic Value) × Step 2 Goal → one of nine archetypes. An agent runs the lookup. There's no interpretation step where two analysts could land in different places. Same inputs, same archetype, same Vital 8 priorities. Two teams on opposite sides of the world receive identical strategic routing.

A six-step sequential pipeline. Step 0's output is Step 1's input. Step 3's scores feed Step 4's action engine. Step 5 closes the loop with gate conditions. It is no coincidence that AI agent pipelines work the same way: this is the same engineering pattern, applied to strategy.
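The first two choices can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the method's reference implementation. The dimension codes and the −3 to +3 scale come from the text above. `DimensionInstruction`, `rank_by_weighted_gap`, and the weights are hypothetical, because the article says ranking is archetype-weighted but does not publish the numbers.

```python
from dataclasses import dataclass

SCORE_MIN, SCORE_MAX = -3, 3  # forced-choice scale described in the article

# Dimension codes quoted in the article; the full set of 24 is not reproduced here.
DIMENSIONS = {110: "Job To Be Done", 220: "Positioning", 420: "Experience", 530: "Media"}


@dataclass(frozen=True)
class DimensionInstruction:
    dimension: int
    current: int
    target: int

    def __post_init__(self) -> None:
        # Reject anything an agent could not act on unambiguously.
        if self.dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension code: {self.dimension}")
        for score in (self.current, self.target):
            if not SCORE_MIN <= score <= SCORE_MAX:
                raise ValueError(f"score out of range: {score}")

    @property
    def gap(self) -> int:
        return self.target - self.current


def rank_by_weighted_gap(instructions, weights):
    """Sort by gap times archetype weight, largest first.

    `weights` maps dimension code to a priority weight (hypothetical values);
    dimensions without an entry default to 1.0.
    """
    return sorted(
        instructions,
        key=lambda i: i.gap * weights.get(i.dimension, 1.0),
        reverse=True,
    )


instructions = [
    DimensionInstruction(220, current=-1, target=2),  # the example from the text
    DimensionInstruction(420, current=0, target=2),
    DimensionInstruction(530, current=1, target=2),
]
hypothetical_weights = {420: 2.0}  # pretend Experience is weighted double
ranked = rank_by_weighted_gap(instructions, hypothetical_weights)
for item in ranked:
    print(item.dimension, DIMENSIONS[item.dimension], item.gap)
```

The validation in `__post_init__` is the point of the exercise: an instruction with an unknown code or an out-of-range score fails immediately, whereas "improve the brand" cannot fail at all.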

What the contrast actually means

When an agent is asked to "improve our marketing strategy," the standard toolbox produces a slide. MCM produces a state — a set of dimension scores, an archetype assignment, a Vital 8 priority list, and a roadmap with named gate conditions. The state is auditable, reproducible, and executable. The slide is none of those things.

That difference compounds as AI gets deeper into marketing operations. An agent running MCM across multiple cycles accumulates context no human memory can match. It knows that the Positioning (220) initiative in Cycle 1 moved the score from −2 to −1 in four months, but that the Experience (420) initiative took seven months for the same movement. It knows the team consistently overscores Emotions (320) by one point relative to external evidence. It surfaces those patterns the next time the team scores.

That kind of longitudinal discipline is impossible when the underlying method outputs unstructured opinion.
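A rough sketch of the two patterns just described, movement speed per dimension and systematic overscoring, might look like this in Python. The 220 and 420 figures are the ones quoted above. The function names and the Emotions (320) score lists are assumptions made for illustration only.

```python
from statistics import mean


def months_per_point(months: int, before: int, after: int) -> float:
    """Months needed to move a dimension score by one point."""
    moved = after - before
    if moved == 0:
        raise ValueError("score did not move")
    return months / abs(moved)


def scoring_bias(team_scores, evidence_scores):
    """Mean of (team score minus externally evidenced score).

    A positive value means the team tends to overscore.
    """
    if len(team_scores) != len(evidence_scores):
        raise ValueError("score lists must have the same length")
    return mean(t - e for t, e in zip(team_scores, evidence_scores))


# Figures from the text: both dimensions moved from -2 to -1.
positioning_pace = months_per_point(months=4, before=-2, after=-1)
experience_pace = months_per_point(months=7, before=-2, after=-1)

# Hypothetical Emotions (320) scores across three cycles.
emotions_bias = scoring_bias(team_scores=[1, 0, 2], evidence_scores=[0, -1, 1])

print(positioning_pace, experience_pace, emotions_bias)
```

Neither calculation is sophisticated. Both depend on the scores being integers on a fixed scale, recorded per dimension and per cycle, which is exactly what an unstructured output does not provide.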

The standard tools weren't wrong. They were built for a different problem — one where a senior human was always the final translator between framework and action. That role isn't disappearing, but it's compressing. The strategies that survive will be the ones an agent can read, score, and act on without a human standing between the diagram and the execution.

The companies winning with AI in marketing are not the ones with the best models. They're the ones with the most precise instruction sets.

What a marketing operating system looks like

This is the gap I built the Marketing Canvas Method to close.

The method gives marketing what finance has had since the 1930s: an operating system. Not another tool. A complete sequence — vocabulary, diagnostic logic, archetype-based prioritization, and a roadmap that traces from segment selection to executable initiative.

Three pieces hold it together.

Twenty-four dimensions, organized into six meta-categories. Customers, Brand, Value Proposition, Journey, Conversation, Metrics. Every dimension has a numbered code — 110 for Job To Be Done, 220 for Positioning, 420 for Experience, 530 for Media, and so on. When someone says "we have a 420 problem," everyone in the room means the same thing. The vocabulary is fixed and precise.

Nine strategic archetypes. Disruptive Newcomer. Efficiency Machine. Brand Evangelist. Stagnant Leader. Pivot Pioneer. Value Harvester. Scale-Up Guardian. Niche Expert. Category Creator. Each archetype is selected by your growth curve, your competitive strategy, and your revenue lever. Each archetype names the eight dimensions that matter most for your situation — your "Vital 8" — so you stop spreading investment across dimensions that won't move the outcome.

Six steps, in sequence. From Lead Segment selection through Strategic Cycle Roadmap. Each step's output is the next step's input. There's no buffet. The order isn't optional.
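The archetype selection described above behaves like a table lookup. The Python sketch below shows that shape only. The nine archetype names come from the article, but the matrix rows, the input values, and the function name `select_archetype` are placeholders invented for this example. The real selection matrix is documented in the method itself and is not reproduced here.

```python
ARCHETYPES = (
    "Disruptive Newcomer", "Efficiency Machine", "Brand Evangelist",
    "Stagnant Leader", "Pivot Pioneer", "Value Harvester",
    "Scale-Up Guardian", "Niche Expert", "Category Creator",
)

# Placeholder rows only: keys and pairings are hypothetical, not the MCM matrix.
HYPOTHETICAL_MATRIX = {
    ("curve-a", "strategy-a", "acquisition"): "Disruptive Newcomer",
    ("curve-b", "strategy-a", "retention"): "Value Harvester",
}


def select_archetype(growth_curve, competitive_strategy, revenue_lever,
                     matrix=HYPOTHETICAL_MATRIX):
    """Return the single archetype for the three inputs, or fail loudly.

    There is deliberately no fallback or "closest match": an undefined
    combination is an error, not an invitation to interpret.
    """
    key = (growth_curve, competitive_strategy, revenue_lever)
    try:
        archetype = matrix[key]
    except KeyError:
        raise LookupError(f"no archetype defined for {key}") from None
    if archetype not in ARCHETYPES:
        raise ValueError(f"matrix returned an unknown archetype: {archetype}")
    return archetype


print(select_archetype("curve-a", "strategy-a", "acquisition"))
```

Because the function is a pure lookup, two teams that agree on the three inputs cannot disagree on the result. Any disagreement has to surface earlier, at the inputs, where it can be argued with evidence.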

The method doesn't reject the standard tools. It places them.

SWOT findings feed Step 1's strategic context map. Porter's Five Forces inform your competitive parameters (M5 and M6). The BCG Matrix sits inside Step 2's revenue ambition setting. The Brand Identity Prism informs Dimensions 220 (Positioning) and 240 (Visual Identity). Customer Journey Maps populate Dimensions 410 (Moments) and 420 (Experience). The Balanced Scorecard becomes part of Step 5's cycle measurement.

Each tool keeps its diagnostic value. None of them stays standalone. The operating system gives them a place to live and a sequence to live in.

The strategy test: five questions

You can run a quick check on your current strategy right now.

Pick any initiative on your roadmap for next quarter. Ask yourself five questions.

1. Which customer segment is this for? (Not "everyone who could benefit." One named segment.)

2. Which dimension is it intended to move? (Not "brand." Specifically: Positioning? Pricing? Influencers?)

3. What's the current score on that dimension, and what's the target?

4. Which archetype-defined priority does this dimension belong to: Fatal Brake, Primary Accelerator, Growth Driver, or none of the above?

5. If the initiative succeeds, what becomes possible that isn't possible now?

If you can answer all five with specifics, you have a strategy.

If you can only answer two or three, you have a tool that produced a recommendation. The recommendation may even be correct. But you can't prove it, you can't defend it under budget pressure, and you can't reproduce it next year when the inputs change.
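The five questions translate naturally into a completeness check on an initiative record. The Python sketch below is one possible encoding. The field names, the `Initiative` class, and the sample initiatives are hypothetical. The priority labels are the ones named in the fourth question.

```python
from dataclasses import dataclass
from typing import List, Optional

# "None of the above" counts as an answer; leaving the field empty does not.
PRIORITY_ANSWERS = {
    "Fatal Brake", "Primary Accelerator", "Growth Driver", "None of the above",
}


@dataclass
class Initiative:
    name: str
    segment: Optional[str] = None
    dimension: Optional[int] = None
    current_score: Optional[int] = None
    target_score: Optional[int] = None
    priority: Optional[str] = None
    unlocks: Optional[str] = None


def unanswered(initiative: Initiative) -> List[str]:
    """Return the questions (by short label) that the record leaves open."""
    missing = []
    if not initiative.segment:
        missing.append("segment")
    if initiative.dimension is None:
        missing.append("dimension")
    if initiative.current_score is None or initiative.target_score is None:
        missing.append("scores")
    if initiative.priority not in PRIORITY_ANSWERS:
        missing.append("priority")
    if not initiative.unlocks:
        missing.append("outcome")
    return missing


vague = Initiative("Podcast sponsorship", segment="mid-market")
specific = Initiative(
    "Reposition for mid-market", segment="mid-market", dimension=220,
    current_score=-1, target_score=2, priority="Primary Accelerator",
    unlocks="sales can lead with a single, differentiated claim",
)
print(unanswered(vague))
print(unanswered(specific))
```

An empty list corresponds to "you have a strategy" in the sense used above. Anything else is a named gap that someone outside the room can see.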

A toolbox produces opinions. An operating system produces traceable decisions.

Where to start

If you've recognized your situation in this article — too many frameworks, not enough sequence — there are two ways in.

The fastest is the Marketing Canvas Quick Assessment: a short diagnostic that surfaces the dimensions where your current strategy is strongest and weakest, and points to the archetype most likely to fit your situation. Twelve minutes. No login.

The deeper way is the full method, available open-source: 24 dimensions, 9 archetypes, 6 steps, every scoring rule and selection matrix documented.

You don't need another tool. You need the system that connects the ones you already have.