Marketing strategy that works in the real world.

Framework deep-dives, archetype guides, and research analysis built on the 24 dimensions of the Marketing Canvas Method.

Don't know your archetype yet?

12 minutes. 24 dimensions scored on a forced-choice scale. You get your Strategic Archetype, your Vital 8 against target, and your Fatal Brakes flagged — before you read anything else.

Get the scoring worksheets.

6 printable A4 scoring grids for running the MCM assessment with your team — no screen required.


Marketing Canvas - Step 2 - Set Your Goals

In the Marketing Canvas Process, once you have finalised your assessment, you should discuss potential scenarios that will help you achieve your goal(s). A useful perspective for this phase is to use the scenarios proposed by Tiffani Bova in her book Growth IQ.

Marketing Canvas - Listening

Most companies listen reactively: they process complaints, run annual surveys, and read reviews when they arrive. The Marketing Canvas demands proactive listening. Dimension 510 explains the difference, why it is a Fatal Brake for Pivot Pioneers, and what the most expensive sentence in marketing is.

Marketing Canvas - Magic

Satisfaction keeps customers. Magic turns them into advocates. Dimension 440 of the Marketing Canvas scores four components — effortless, stress-free, sensory pleasure, and social pleasure — and explains why exceeding expectations on something the customer doesn't care about isn't magic, it's waste.

Marketing Canvas - Channels

Most companies have channels. Few have orchestrated channels. Dimension 430 of the Marketing Canvas scores the difference — and explains why a brand with three connected channels outperforms one with eight siloed ones.

Marketing Canvas - Experience

Experience is a Fatal Brake for three archetypes. In every case the mechanism is the same: experience failure is the proximate cause of churn. Dimension 420 of the Marketing Canvas scores consistency — not brilliance — and explains why "leaving nothing to chance" is a scored criterion, not an aspiration.

Marketing Canvas - Proof

Every brand makes claims. Few build proof systems. Dimension 340 of the Marketing Canvas identifies four types of proof — demonstration, logical explanation, endorsement, and reputation — and explains why stacking all four is the only way to convert sceptical prospects into convinced ones.

Marketing Canvas - Pricing

Pricing errors run in both directions. Underpricing signals low quality and leaves margin on the table. Overpricing creates resentment no feature list can fix. Dimension 330 of the Marketing Canvas scores whether your pricing actively supports your positioning — or quietly contradicts it.

Marketing Canvas - Emotions

Features bring customers in. Emotions keep them and turn them into advocates. Dimension 320 of the Marketing Canvas distinguishes between the emotional job customers want to feel in their lives and the emotional benefit your product actually delivers, and explains why B2B brands skip this distinction at their peril.

Marketing Canvas - Features

Having twenty features means nothing if none of them is the definitive reason to buy. Dimension 310 of the Marketing Canvas scores features on three levels — core, differentiating, unique — and explains why it appears in seven of the nine strategic archetypes.

Marketing Canvas - Visual Identity

Visual identity is the only Brand dimension customers score before any interaction begins. The first impression formed from a colour, a typeface, or a photography style is a scoring event — rapid and largely subconscious. Dimension 240 of the Marketing Canvas applies four tests to determine whether what customers see matches what the brand stands for.

Marketing Canvas - Values

Most brands have values on a wall. Very few have values that change decisions. Dimension 230 of the Marketing Canvas scores the difference — and the acid test is a single question: can you name a decision made in the last year because of a stated value, even when a different decision would have been more profitable?

How to Turn Sustainability Goals Into Real Marketing Strategy

Most sustainability briefs land without a number or a deadline. Here is how early-career marketers can turn a vague direction into a structured goal their team can actually execute.

Marketing Strategy for Millennials from Marketing Cloud

An interesting infographic from Marketing Cloud proposes five steps for creating a marketing strategy aimed at millennials. As you may have noticed, I advocate using the Marketing Canvas to design your marketing strategy. Let's check whether these steps fit into its process.

How the Marketing Canvas Method Works: A Step-by-Step Guide

Six steps. One lead segment. A scored baseline, a ranked set of initiatives, and a 12-month roadmap with built-in gates. Here's the full MCM process — what happens at each step and why the sequence is non-negotiable.

Why do you need a bold question for your marketing strategy?

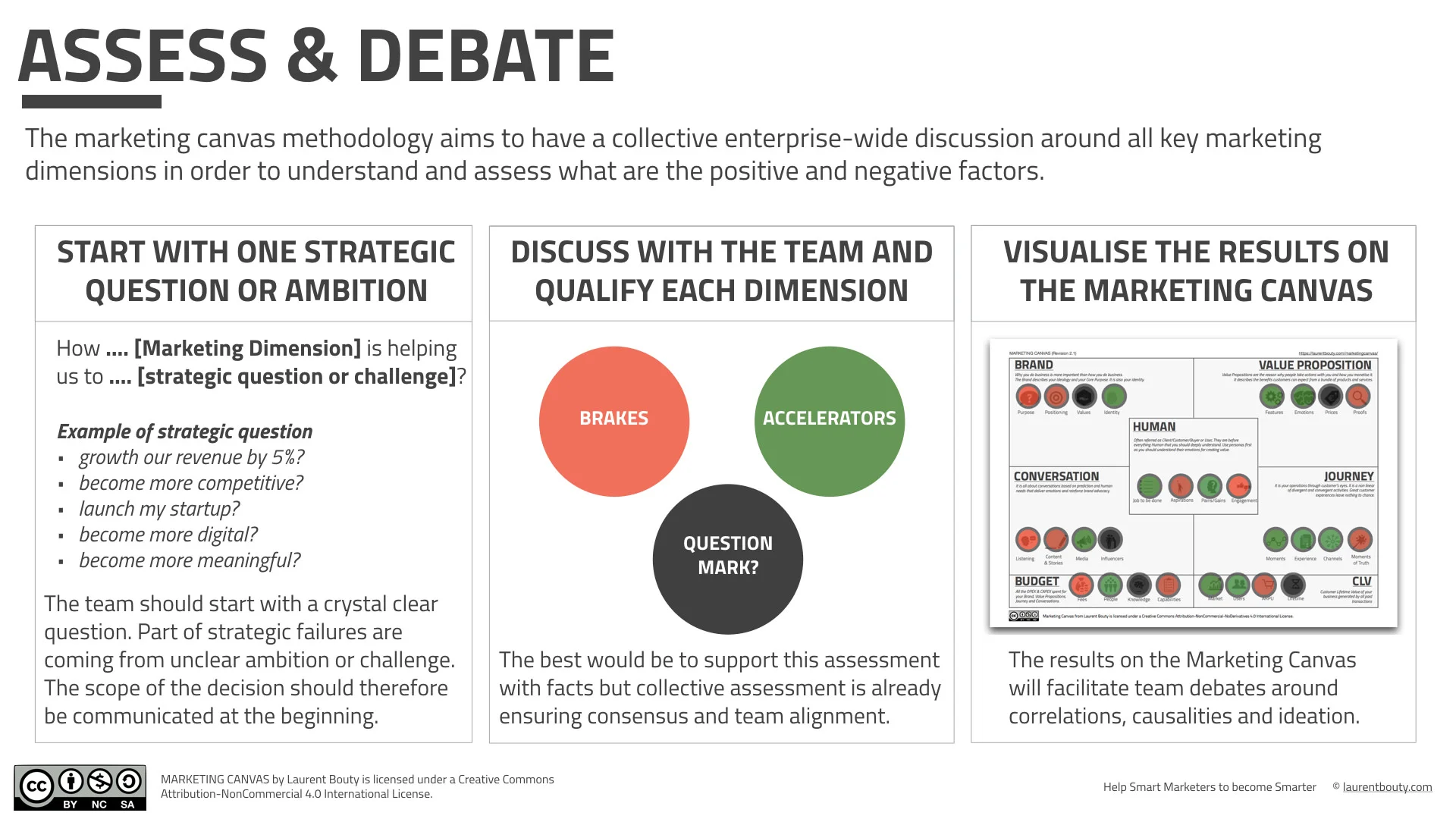

What is the best way to start defining the marketing strategy of your company, business or activity? My proposal is to start with a bold strategic question! Why?

How to Assess Your Marketing Situation with the Marketing Canvas

One key objective of the Marketing Canvas is to facilitate debate and discussion around a clear strategic challenge question. Most leadership specialists agree that one of the key reasons strategies fail is that the decision to be made is unclear. When you do the strategic marketing exercise, you need to be crystal clear about which question you are trying to answer.

AI is Changing Marketing

An excellent article by Andrew Stephen on Forbes about artificial intelligence and how it is changing marketing. Here are four main highlights, extracted from the article, which I invite you to read in full.